I remember the exact moment I realized my content wasn’t getting the Google love it deserved. I had built what I thought was fantastic, engaging content—yet my pages stubbornly refused to rank. It was frustrating, almost humiliating. I knew I had poured hours into creating valuable info, but somehow, it was invisible to search engines. That’s a lightbulb moment for many SEO enthusiasts and site owners alike: understanding that even the best content can fall flat if it isn’t properly indexed. Would you believe that nearly 30% of web pages remain unindexed, simply because of technical oversights? That’s a common trap I see all the time. But don’t worry; what I’ll share next is the blueprint I used to fix my indexing issues—and it can do the same for you.

Why Your Dynamic Content May Be Missing Out

Ever launched a new post or updated your site with fresh, dynamic content only to see it ranking nowhere? It’s a familiar dilemma. Dynamic content—think filters, user dashboards, or real-time updates—adds immense value, but it also complicates how search engines crawl and understand your pages. Google, for instance, relies heavily on technical signals to discover and index your content efficiently. If your site isn’t optimized for these signals, your pages might as well be invisible. Early in my journey, I overlooked how crucial server-side rendering and structured data are for ensuring Google can see and understand my dynamic pages. That mistake cost me valuable traffic until I learned to adapt my technical strategies. Want to avoid the same pitfalls? The key is understanding what prevents your content from getting indexed and taking proactive steps to fix it.

Is Fixing Indexing Really Worth the Effort?

This is a question I hear often. Doubts about technical fixes are common—after all, should you really focus on behind-the-scenes adjustments when your content is already published? I used to think the same until I saw my traffic plummet after a platform update. Reliable SEO isn’t just about keyword stuffing or backlinks; it’s about ensuring your content actually reaches Google. Properly indexed content leads to higher rankings, more visibility, and better traffic—things every site owner craves. According to recent studies, websites that fix technical SEO issues experience an average increase of 30% in organic traffic within three months. That’s a solid reason to invest the time and effort in solving these issues. If you’re wondering where to start, I recommend diving into detailed technical audits, which are available [here](https://topnewshubs.com/technical-seo-tips-optimizing-your-site-for-faster-indexing-and-ranking). Trust me, the results are worth it.

Start with a Comprehensive Site Audit

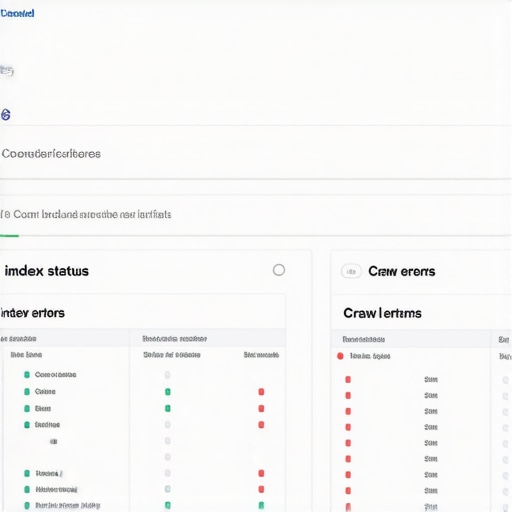

My first move was to conduct a detailed technical SEO audit using tools like Screaming Frog and Google Search Console. I identified duplicate content, broken links, and crawl errors. For example, during my audit, I discovered a misconfigured robots.txt file blocking important sections. Fixing this immediately opened up those pages for crawl and indexation. This step is critical because unnoticed technical issues can silently sabotage your SEO efforts.
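To make that robots.txt check repeatable, here is a minimal sketch using Python's standard `urllib.robotparser`. The rules in `SAMPLE_ROBOTS` and the `example.com` URLs are hypothetical stand-ins, not my actual file. Note that the standard-library parser does plain prefix matching and may not mirror every detail of how Googlebot interprets wildcards, so treat it as a first-pass check.

```python
from urllib.robotparser import RobotFileParser


def blocked_urls(robots_txt: str, urls: list[str], user_agent: str = "Googlebot") -> list[str]:
    """Return the URLs that the given robots.txt rules disallow for user_agent."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return [url for url in urls if not parser.can_fetch(user_agent, url)]


# Hypothetical rules resembling the kind of misconfiguration described above:
# the /blog/ section is disallowed by accident alongside /admin/.
SAMPLE_ROBOTS = """\
User-agent: *
Disallow: /blog/
Disallow: /admin/
"""

if __name__ == "__main__":
    pages = [
        "https://example.com/blog/indexing-guide",
        "https://example.com/products/widget",
        "https://example.com/admin/login",
    ]
    for url in blocked_urls(SAMPLE_ROBOTS, pages):
        print("blocked:", url)
```

Feeding it the list of pages you expect to rank is a quick way to spot a section that is blocked by mistake.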

Optimize Crawl Budget and Site Structure

Next, I focused on making my site easier for search engines to crawl. I simplified my URL structure, reducing unnecessary parameters, and created a clear hierarchy. Think of your website as a city map; if streets are blocked or confusing, traffic (crawlers) gets lost. I implemented internal linking strategies, connecting related pages to distribute link equity efficiently. For a practical example, I cross-linked my blog posts with relevant product pages, ensuring Google understood the relationship and importance of each page.
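As an illustration of trimming unnecessary parameters, the sketch below collapses URL variants into one canonical form so duplicates become easy to spot. The `IGNORED_PARAMS` set is an assumption for the example; which parameters are safe to drop depends entirely on your own site.

```python
from collections import defaultdict
from urllib.parse import parse_qsl, urlencode, urlsplit, urlunsplit

# Hypothetical parameters assumed not to change page content.
IGNORED_PARAMS = {"utm_source", "utm_medium", "utm_campaign", "sessionid", "ref"}


def canonical_url(url: str) -> str:
    """Drop ignored query parameters, sort the rest, and strip the fragment."""
    parts = urlsplit(url)
    kept = [
        (key, value)
        for key, value in parse_qsl(parts.query, keep_blank_values=True)
        if key.lower() not in IGNORED_PARAMS
    ]
    kept.sort()
    return urlunsplit((parts.scheme, parts.netloc.lower(), parts.path, urlencode(kept), ""))


def duplicate_groups(urls: list[str]) -> dict[str, list[str]]:
    """Group crawled URLs that collapse to the same canonical form."""
    groups: dict[str, list[str]] = defaultdict(list)
    for url in urls:
        groups[canonical_url(url)].append(url)
    return {canon: members for canon, members in groups.items() if len(members) > 1}


if __name__ == "__main__":
    crawled = [
        "https://example.com/shoes?color=red&utm_source=newsletter",
        "https://example.com/shoes?sessionid=42&color=red",
        "https://example.com/shoes?color=blue",
    ]
    for canon, members in duplicate_groups(crawled).items():
        print(canon, "<-", len(members), "variants")
```

Each group with more than one member is a candidate for a canonical tag or a redirect.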

Implement Technical Fixes for Faster Indexing

With the audit done, I prioritized technical fixes that speed up indexation. I generated an XML sitemap and submitted it in Search Console. I also set up a CDN to cut page load times. Faster responses improve the user experience and make it easier for crawlers to fetch more of the site on each visit. I remember noticing a distinct uptick in crawl frequency after these optimizations.
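If your platform doesn't generate a sitemap for you, a small script can. This is a minimal sketch using only the standard library and the sitemaps.org 0.9 namespace; the page list and the `build_sitemap` helper are illustrative, and a real site would pull URLs and modification dates from its CMS or database.

```python
import xml.etree.ElementTree as ET

SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"


def build_sitemap(pages: list[tuple[str, str]]) -> str:
    """Build sitemap XML from (absolute URL, last-modified date as YYYY-MM-DD) pairs."""
    urlset = ET.Element("urlset", xmlns=SITEMAP_NS)
    for loc, lastmod in pages:
        url = ET.SubElement(urlset, "url")
        ET.SubElement(url, "loc").text = loc
        ET.SubElement(url, "lastmod").text = lastmod
    # Serialize as UTF-8 bytes so the XML declaration names the right encoding.
    return ET.tostring(urlset, encoding="utf-8", xml_declaration=True).decode("utf-8")


if __name__ == "__main__":
    # Hypothetical pages for illustration.
    example_pages = [
        ("https://example.com/", "2025-01-15"),
        ("https://example.com/blog/indexing-guide", "2025-02-03"),
    ]
    print(build_sitemap(example_pages))
```

Save the output as `sitemap.xml` at the site root, then submit that URL in Search Console.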

Natural Use of Structured Data

Adding structured data, like schema markup, helped Google better understand my content. For example, marking up product reviews and FAQs increased my chances of appearing in rich snippets. I used Google’s Structured Data Markup Helper to implement these snippets effectively, resulting in improved visibility in search results.
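For a sense of what FAQ markup looks like, here is a rough sketch that builds a schema.org `FAQPage` block as JSON-LD. The question and answer are made up, and `faq_jsonld` is a hypothetical helper, not something the Markup Helper produces. Valid markup makes a page eligible for rich results; it doesn't guarantee them.

```python
import json


def faq_jsonld(faqs: list[tuple[str, str]]) -> str:
    """Return a <script> tag containing schema.org FAQPage markup as JSON-LD."""
    data = {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in faqs
        ],
    }
    # Escape "</" so answer text can never close the script tag early.
    payload = json.dumps(data, indent=2).replace("</", "<\\/")
    return '<script type="application/ld+json">\n' + payload + "\n</script>"


if __name__ == "__main__":
    # Hypothetical FAQ entry for illustration.
    print(faq_jsonld([
        ("How long does indexing take?", "It varies from site to site."),
    ]))
```

Paste the resulting tag into the page and run it through a structured data testing tool before publishing.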

Enhance Site Performance and User Experience

Site speed is a ranking factor. I optimized images, enabled browser caching, and trimmed code bloat. A faster website lets search engines crawl more pages within the crawl budget, increasing the likelihood of indexation. This step is akin to clearing congestion on a highway: more traffic gets through efficiently.

Monitor and Adjust Regularly

Finally, I set up regular monitoring with Google Search Console and analytics tools. I tracked indexing status, crawl errors, and page performance. When I noticed a sudden drop in indexation, I revisited my logs and fixed issues promptly. Consistent adjustment based on real data keeps your site healthy and competitive in search rankings.
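When I say I revisited my logs, this is roughly the kind of check I mean. The sketch assumes the common "combined" access log format and simply matches "Googlebot" in the user-agent string, which can be spoofed, so treat the counts as a hint rather than proof. The pattern and the sample lines are illustrative.

```python
import re
from collections import Counter

# Assumes the "combined" log format: request, status, size, referrer, user agent.
LOG_PATTERN = re.compile(
    r'"(?P<method>[A-Z]+) (?P<path>\S+) [^"]*" (?P<status>\d{3}) \S+ "[^"]*" "(?P<agent>[^"]*)"'
)


def googlebot_errors(lines) -> Counter:
    """Count (path, status) pairs for requests claiming to be Googlebot that got a 4xx/5xx."""
    errors: Counter = Counter()
    for line in lines:
        match = LOG_PATTERN.search(line)
        if not match or "Googlebot" not in match.group("agent"):
            continue
        status = int(match.group("status"))
        if status >= 400:
            errors[(match.group("path"), status)] += 1
    return errors


if __name__ == "__main__":
    # Hypothetical log lines for illustration.
    sample = [
        '66.249.66.1 - - [10/Mar/2025:06:25:14 +0000] "GET /blog/old-post HTTP/1.1" 404 512 "-" '
        '"Mozilla/5.0 (compatible; Googlebot/2.1; +http://www.google.com/bot.html)"',
        '66.249.66.1 - - [10/Mar/2025:06:25:20 +0000] "GET /blog/new-post HTTP/1.1" 200 2048 "-" '
        '"Mozilla/5.0 (compatible; Googlebot/2.1; +http://www.google.com/bot.html)"',
    ]
    for (path, status), count in googlebot_errors(sample).most_common():
        print(status, path, count)
```

A sudden cluster of 404s or 5xx responses served to the crawler is usually the first place to look when indexation drops.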

Many marketers believe that most of their SEO success hinges on backlinks or keyword optimization, but the real traps often lie in misconceptions about these strategies. For instance, a common myth is that building large numbers of backlinks automatically boosts rankings; in reality, the focus should be on the quality and relevance of backlinks, not just quantity. Overlooking this nuance can lead to unnatural link profiles and potential penalties, something I learned the hard way. Furthermore, many assume that technical SEO is a one-and-done task, but search algorithms evolve rapidly. You need continuous audits and updates to stay ahead. False assumptions like these can be costly and hinder your growth.

A critical yet underappreciated aspect is content relevance versus content quantity. It’s tempting to produce large volumes of content, but search engines rank pages based on user intent and engagement signals. Prioritizing cornerstone, authoritative content that addresses core topics, such as comprehensive guides or in-depth case studies, does far more for trust and ranking authority.

But let’s address a question many advanced marketers ask: How do I measure the true quality of my backlinks? While some focus solely on metrics like domain authority, a more nuanced approach involves assessing the contextual relevance and anchor text diversity of backlinks. A backlink from a reputable, thematically aligned site signals more authority than dozens of irrelevant links. Additionally, diversifying your backlink sources prevents patterns that look artificial and risky.
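One way to put a number on anchor text diversity is to count how concentrated your anchors are. The sketch below is a rough heuristic of my own, not an official metric: there is no published threshold, so the share at which a profile starts to look artificial is a judgment call. The anchor list is hypothetical and would normally come from a backlink export.

```python
from collections import Counter


def anchor_text_profile(anchors: list[str]) -> dict:
    """Summarize how concentrated a backlink profile's anchor texts are."""
    normalized = [a.strip().lower() for a in anchors if a.strip()]
    total = len(normalized)
    if total == 0:
        return {"total": 0, "unique": 0, "top_anchor": None, "top_share": 0.0}
    counts = Counter(normalized)
    top_anchor, top_count = counts.most_common(1)[0]
    return {
        "total": total,
        "unique": len(counts),
        "top_anchor": top_anchor,
        "top_share": top_count / total,  # fraction of links using the most common anchor
    }


if __name__ == "__main__":
    # Hypothetical anchors for illustration.
    exported = ["Best Running Shoes", "best running shoes", "example.com", "this guide"]
    print(anchor_text_profile(exported))
```

A profile where one exact-match phrase dominates deserves a closer look, while a mix of brand names, bare URLs, and natural phrases is what organic linking tends to produce.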

An often-overlooked mistake is ignoring technical SEO’s role in backlink equity flow. Slow-loading pages or poor site structure can inhibit link value transfer and diminish rankings. For this reason, optimizing website performance is vital. This involves not just speed but also ensuring there’s no crawl budget waste due to duplicate content or broken links.

Remember, building high-quality backlinks and optimizing technical SEO are intertwined processes. Overemphasizing one at the expense of the other can limit your results. Additionally, avoid common myths like “more links equal better rankings,” which can lead you astray, especially if those links are low-quality or spammy.

Finally, stay vigilant and adaptable. The SEO landscape changes fast, and strategies that worked a year ago may now be outdated. Run technical audits on a regular schedule and revisit earlier findings to stay aligned with best practices. Remember, your authority isn’t just built on backlinks or keywords but on a holistic, nuanced approach combining content, technical finesse, and ethical link-building.

Have you ever fallen into this trap? Let me know in the comments.

Maintaining a strong SEO presence over time requires the right combination of tools, consistency, and strategic adjustments. One of the most powerful resources I rely on is Screaming Frog SEO Spider. I use it weekly to crawl my entire website, identifying crawl errors, duplicate content, and indexing issues. Its ability to visualize site architecture helps me pinpoint structural bottlenecks that might hinder my SEO efforts. Alongside, I utilize Google Search Console for real-time insights into how Google perceives my website, especially monitoring crawl stats and indexing status. Regularly reviewing this data ensures I catch issues early and adapt accordingly.

For technical SEO, I recommend PageSpeed Insights from Google. In my experience, optimizing site speed using their suggestions—like reducing server response times and optimizing images—not only improves user experience but also enhances my crawl efficiency. Building on that, I implement technical fixes suggested in industry guides, which have historically resulted in notable ranking jumps.

Content auditing tools such as SEMrush help me identify high-performing articles and gaps in topic coverage. This consistent review allows me to refresh cornerstone content and make data-driven decisions about expanding or pruning topics. I believe that updating existing content, backed by insights from these tools, is often more efficient than constantly producing new pieces.

Additionally, I automate routine tasks using Zapier. For example, I set up workflows that notify me of backlink profile changes or indexing irregularities, enabling swift action. As my backlink profile grows, I also work to diversify and strengthen it, in line with predictions that quality over quantity will continue to dominate in 2026 and beyond.

How do I keep my SEO tools and tactics effective over time?

Continuous learning and adaptation are vital. I stay updated through authoritative sources on technical SEO, and I test new tools and techniques in a staging environment before rolling them out live. I also schedule quarterly audits to recalibrate my strategies, ensuring they align with evolving search engine algorithms and industry standards.

Looking ahead, I predict that integration of AI-driven analytics and automation will revolutionize how we maintain SEO health. Embracing these innovations now—such as experimenting with tools that provide AI-based content optimization—can give you a competitive edge. For example, exploring AI tools that analyze backlink relevance can help you prioritize high-impact link-building efforts effectively.

Want to stay ahead? Try automating the monitoring of your backlink profile. It can save you time and help maintain your site’s authority in the long run. Remember, the key to sustained SEO success is proactive management coupled with strategic tool usage, so start refining your process today and watch your rankings flourish.

Reflecting on my journey within the ever-evolving world of SEO, one profound lesson stands out: technical mastery combined with authentic backlink strategies can transform invisible content into search engine magnetism. I once believed that compelling content alone was enough, but I soon learned that without a solid technical foundation and quality backlinks, even the best pages could remain in the shadows. Recognizing this shifted my entire approach, revealing the nuanced dance between behind-the-scenes optimization and on-page elements that truly drive rankings. Embracing both facets has been my game-changer, turning overlooked pages into authority hubs and boosting organic traffic significantly.

The Hidden Gems of SEO: Lessons Few Talk About

- Technical precision beats guesswork: Simple fixes like optimizing crawl budget and fixing duplicate content can unlock a floodgate of indexation.

- Backlinks are more than just links: Quality, relevance, and context matter more than sheer quantity. Natural, human-built backlinks create lasting authority.

- Structured data isn’t optional: Proper schema markup helps search engines understand your content deeply, resulting in featured snippets and rich results.

- Speed and performance are trust signals: A fast-loading site encourages crawlers and users alike to engage more.

- Continuous vigilance pays off: Regular audits with tools like Search Console and Screaming Frog keep your SEO health in check and your rankings climbing.

My Essential Arsenal for SEO Success

- Screaming Frog SEO Spider: For in-depth crawling and identifying technical issues with precision. It visualizes site architecture, exposing hidden bottlenecks.

- Google Search Console: The primary window into how Google perceives your site—crawling, indexing, and performance data all in one place.

- PageSpeed Insights: Speed is king; these insights help tailor technical fixes that improve load times, user experience, and crawl efficiency.

- SEMrush’s Content Analyzer: To assess content performance, identify gaps, and guide refresh strategies for cornerstone content that builds authority.

Pursue Growth With Purpose and Passion

Embarking on the path of SEO optimization is a commitment to continuous learning and adaptation. Embrace the challenge of technical fixes, cultivate high-quality backlinks, and prioritize user experience through speed and structured data. Remember, the most impactful strategies are rooted in genuine value and technical integrity. Take proactive steps today—review your site’s health, refine your backlink profile, and stay curious about emerging tools and tactics. The rewards are not just rankings but building a resilient digital presence that stands the test of time. What’s one technical SEO tweak you’re eager to implement this week to elevate your content’s visibility? Share your plan below and let’s grow together.